A guide to reproducible research papers

A core tenet of science is the ability to independently verify research results. When computations are involved, verifiability implies reproducibility: one should be able to re-run the computations to ensure they get the same results, at which point they may want to start experimenting with variants of the computational methods, feed it different data sets, and so on. This is the motivation behind our work on Guix: we want to empower scientists by providing a tool in support of reproducible computations and experimentation.

This article is a guide to using Guix for reproducible research work: producing research articles with enough information so that anyone, anytime can re-run the computational experiments it describes. Before showing how to get this done with Guix, let’s look at existing practices and see where they fall short.

On the difficulty of sharing computational processes

A citation attributed to Jon Claerbout summarizes the problem:

Published documents are merely the advertisement of scholarship whereas the computer programs, input data, parameter values, etc. embody the scholarship itself.

Authors of research papers often realize that they need to share not only the data and source code but also the software environment they used, somehow. There are two common ways to do that, sometimes used in combination: recording software package names and versions in the paper (in an appendix), and providing a ready-to-use application bundle such as a Docker or virtual machine image.

Recording name/version pairs is appealing. The intuition is that by communicating the names and version numbers of my dependencies, someone can recreate the same environment that I used. However, where should I stop? If it’s an R program, should I only list R packages? What about R itself? Should I include the linear algebra libraries R depends on? And if my code is C/C++, should I include the compiler version number? The C library? Fellow researcher Konrad Hinsen gives this definition:

“Code” is the code you care about. “Environment” is code you don’t care about.

The problem is that all of “the environment” influences the results produced by the code you care about; it’s hard to make a judgment call to decide that some things should be excluded and others not.

So we see articles with software environments descriptions ranging from

“I used Ubuntu 22.05” to long lists of package name/version—in

research domains where R is used a lot, authors often

provide the output of

sessionInfo()

as an appendix—some

even including environment variable definitions! One

obvious issue with those package name/version lists is that they are not

actionable: you’re not going to build and install every single package

version by hand, it’s just not practical. So in the end, they act more

as a hint: if the software behaves differently than what’s described in

the paper, it might be because I’m using a slightly different version

of some dependency.

The second problem is that a name/version pair fails to capture the

complexity of a package dependency graph: it doesn’t tell which build

options were used, whether patches were applied, which optional

dependencies were enabled, and so on.

To address that, the other option is ship the bits: provide a Docker

or virtual machine image of containing the software of interest. This

is what more and more conference Artifact Evaluation Committees have

come to recommend. It sure lets you run the code in the right software

environment, but the cost is high: you can’t tell what code

you’re running. The image is a big binary blob that was produced by a

complex computational process (apt install, pip install, make,

etc.) but usually one cannot map its contents back to source code.

You may object that, if you have the Dockerfile, then it’s fine. It’s

not. Dockerfiles describe a process that is usually not reproducible

since it depends on external resources such as the set of binary

packages distributed by, say, Ubuntu at a given point in time. Even if

it were reproducible, the whole process is fundamentally opaque: it

assembles opaque binaries, starting with a full operating system image

and piling binaries fetched by pip or other tools.

Conversely, Guix is, at its core, about providing a verifiable path from source code to binary. Guix packages are essentially source code that describes how to build software from source.

Our goal in the remainder of this article is to provide a step-by-step guide on using Guix to manage the software environment of your research software.

Executable provenance meta-data

With Guix as the basis of your computational workflow, you can get what’s in essence executable provenance meta-data: it’s like that long list of package name/version pairs, except more precise and immediately deployable. Let’s see how this can be achieved.

Update (2025-10-13): For an updated version of this guide, check out the Guix cookbook.

Step 1: Setting up the environment

The first step will be to identify precisely what packages you need in your software environment. Assuming you have a Python script that uses NumPy, you can start by creating an environment that contains these two packages and try to run your code in that environment:

guix shell -C python python-numpy -- python3 ./myscript.pyThe -C flag here (or --container) instructs guix shell to create

that environment in an isolated container with nothing but the two

packages you asked for. That way, if ./myscript.py needs more than these

two packages, it’ll fail to run and you’ll immediately notice. On some

systems --container is not supported; in that case, you can resort to

--pure instead.

Perhaps you’ll find that you also need Pandas and add it to the environment:

guix shell -C python python-numpy python-pandas -- \

python3 ./myscript.pyIf you fail to guess the name of the package (this one was easy!), try

guix search.

Environments for Python, R, and similar high-level languages are relatively easy to set up. For C/C++ code, you may find need many more packages:

guix shell -C gcc-toolchain cmake coreutils grep sed make openmpi -- …Or perhaps you’ll find that you could just as well provide a definition for your package.

Eventually, you’ll have a list of packages that satisfies your needs.

What if a package is missing? Guix and the main scientific and HPC channels provide about 25,000 packages today. Yet, there’s always the possibility that the one package you need is missing. In that case, you will need to provide a package definition for it in a dedicated channel of yours. For software in Python, R, and other high-level languages, most of the work can usually be automated by using

guix import. Join the friendly Guix community to get help!

Step 2: Recording the environment

Now that you have that guix shell command line with a list of

packages, the best course of action is to save it in a manifest

file—essentially a software bill of materials—that Guix can then

ingest. There are other ways to do

that

but the easiest way to get started is by “translating” your command line

into a manifest:

guix shell python python-numpy python-pandas \

--export-manifest > manifest.scmPut that manifest under version control! From there anyone can redeploy the software environment described by the manifest and run code in that environment:

guix shell -C -m manifest.scm -- python3 ./myscript.pyHere’s what manifest.scm reads:

;; What follows is a "manifest" equivalent to the command line you gave.

;; You can store it in a file that you may then pass to any 'guix' command

;; that accepts a '--manifest' (or '-m') option.

(specifications->manifest

(list "python" "python-numpy" "python-pandas"))It’s a code snippet that lists packages. Notice that there are no version

numbers! Indeed, these version numbers are specified in package definitions,

located in Guix channels. To allow others to reproduce the exact same

environment as the one you’re running, you need to pin Guix itself , by

capturing the current Guix channel commits with guix describe:

guix describe -f channels > channels.scmThis channels.scm file is similar in spirit to “lock files” that some

deployment tools employ to pin package revisions. You should also keep

it under version control in your code, and possibly update it once in a

while when you feel like running your code against newer versions of its

dependencies. With this file, anyone, at any time and on any machine,

can now reproduce the exact same environment by running:

guix time-machine -C channels.scm -- shell -C -m manifest.scm -- \

python3 ./myscript.pyIn this example we rely solely on the guix channel, which provides the

Python packages we need. Perhaps some of the packages you need live in

other channels—maybe guix-cran if you

use R, maybe guix-science. That’s fine: guix describe also captures

that.

Of course do include a README file giving the exact command to run the

code. Not everyone uses Guix so it can be helpful to also provide

minimal non-Guix setup instructions: which package versions are used,

how software is built, etc. As we have seen, such instructions would

likely be inaccurate and inconvenient to follow at best. Yet, it can be

a useful starting point to someone trying to recreate a similar

environment using different tools. It should probably be presented as

such, with the understanding that the only way to get the same

environment is to use Guix.

Step 3: Ensuring long-term source code archival

We insisted on version control before: for the manifest.scm and

channels.scm files, but of course also for your own code. Our

recommendation is to have these two .scm files in the same repository

as the code they’re about.

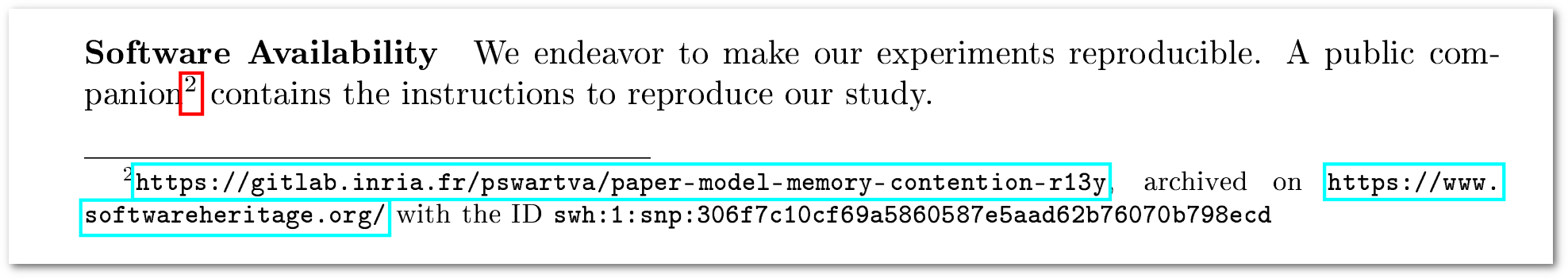

Since the goal is enabling reproducibility, source code availability is a prime concern. Source code hosting services come and go and we don’t want our code to vanish in a whim and render our published research work unverifiable. Software Heritage (SWH for short) is the solution for this: SWH archives public source code and provides unique intrinsic identifiers to refer to it—SWHIDs. Guix itself is connected to SWH to (1) ensure that the source code of its packages is archived, and (2) to fall back to downloading from the SWH archive should code vanish from its original site.

Once your own code is available in a public version-control repository, such as a Git repository on your lab’s hosting service, you can ask SWH to archive it by going to its Save Code Now interface. SWH will process the request asynchronously and eventually you’ll find your code has made it into the archive.

Step 4: Referencing the software environment

This brings us to the last step: referring to our code and software environment in our beloved paper. We already have all our code and Guix files in the same repository, which is archived on SWH. Thanks to SWH, we now have a SWHID, which uniquely identifies the relevant revision of our code.

Following SWH’s own

guide,

we’ll pick an swh:dir kind of identifier, which refers to the

directory of the relevant revision/commit of our repository, and we’ll

keep contextual info for clarity—that includes the original URL.

Putting it all together, we’ll conclude our paper with a sentence along

these lines:

The source code used to produce this study, as well as instructions to run it in the right software environment using GNU Guix, is archived on Software Heritage as

swh:1:dir:cc8919d7705fbaa31efa677ce00bef7eb374fb80;origin=https://gitlab.inria.fr/lcourtes-phd/edcc-2006-redone;visit=swh:1:snp:71a4d08ef4a2e8455b67ef0c6b82349e82870b46;anchor=swh:1:rev:36fde7e5ba289c4c3e30d9afccebbe0cfe83853a.

With this information, the reader can:

- get the source code;

- reproduce its software environment with

guix time-machineand run the code; - inspect and possibly modify both the code and its environment.

Mission accomplished!

Examples

Perhaps you don’t feel adventurous enough to be the first one to follow this methodology. Worry not: you won’t be the first! Here are examples of reproducible papers built along the lines of this guide (with some variations), in several different fields:

- Philippe Swartvagher et al., Tracing task-based runtime systems: feedbacks from the StarPU case. This article studies the impact of tracing complex HPC applications, especially what are the sources of performance degradation when an application execution is traced; evaluates the solutions to reduce the tracing overhead; and explores clock synchronization issues when distributed applications are traced. The paper is still under review but its content is available in Philippe's thesis. Considered applications are C programs using MPI, launched with Slurm, then Python scripts are used to process results and generate plots. The companion repository contains instructions and scripts to reproduce the whole study.

- Emmanuel Agullo, Marek Felšöci, Guillaume Sylvand, A comparison of selected solvers for coupled FEM/BEM linear systems arising from discretization of aeroacoustic problems with the associated technical report describing the experimental environment and providing instructions for reproducing the experiments. Experiments in this study rely on private industrial code and can thus be reproduced only by a limited number of people. However, the publicly available material provides everyone with a fully documented example of building reproducible experimental studies within a constrained industrial context thanks the association of GNU Guix and the literate programming in Org mode.

- Vic-Fabienne Schumann et al., SARS-CoV-2 infection dynamics revealed by wastewater sequencing analysis and deconvolution (preprint). The pipeline used to compute the results shown in the article is made with PIGx, a tool and collection of genomics pipelines that builds upon Guix. The “Data/Code Availability” section links to a repository that contains the manifest and channels files that were used and instructions to run the analysis.

Three contributions to the Ten Years Reproducibility Challenge organized by the ReScience C journal. In each article, the link to the code repository is at the bottom of the first page.

Ludovic Courtès, [Re] Storage Tradeoffs in a Collaborative Backup Service for Mobile Devices, ReScience C 6, 1, #6. This article reproduces the results of a 10-year old article. Experiments in the original article involved a complex software stack and did not use Guix (it actually predates Guix!). The article shows how to come up with a similar software environments a decade later, and how to use Guix to produce a pipeline that goes from source code to PDF.

Konrad Hinsen, [¬Rp] Stokes drag on conglomerates of spheres, ReScience C 6, 1, #7. Tries to reproduce a study in computational fluid dynamics, based on Fortran code published in 1993. Ultimately fails because some of the code was lost, but the surviving code works nicely in a reproducible Guix environment.

Konrad Hinsen, [Rp] Structural flexibility in proteins — impact of the crystal environment, ReScience C 6, 1, #5. Describes the reproduction of a computation of the normal modes of protein crystals, originally done in 2008 using Python scripts that no longer work with modern Python versions. A Guix environment based on the channel guix-past makes it possible to run historical versions of Python and some of its libraries.

Wrap-up

The key takeaways of this guide for reproducible papers are:

- Recording package name/version is often of little help when it comes to running the code; conversely, providing an opaque image makes it easy to run the code but prevents verifiability and experimentation.

- Guix lets you record the software environment with two files:

manifest.scm, which lists software packages, andchannels.scm, which pins Guix and its channels to a specific revision. - A combined command consumes these files and reproduces the exact

same software environment:

guix time-machine -C channels.scm -- shell -m manifest.scm. - With these files and your code under version control and archived on Software Heritage, it’s enough to share one SWHID in your paper.

Here are resources to learn more about this whole process:

- Toward practical transparent verifiable and long-term reproducible research using Guix, Nature Scientific Data article (volume 9, Oct. 2022) by N. Vallet et al.

- Guix as a tool for computational science, talk by K. Hinsen at the Ten Years of Guix event

- Using Guix for scientific, reproducible, and publishable experiments, talk by P. Swartvagher at the same venue

- Archive, reference, describe and cite software source code: a pathway to reproducibility, talk by M. Gruenpeter at the same venue

- Guix and Org mode, a powerful association for building a reproducible research study, a self-contained tutorial by M. Felšöci.

If you’re interested, please join our next Reproducible Research

Hackathon,

which will take place on-line on June 27th, 2023, come to the Workshop

on Reproducible Software Environments in

November 2023, and/or subscribe to the guix-science mailing

list!

Unless otherwise stated, blog posts on this site are copyrighted by their respective authors and published under the terms of the CC-BY-SA 4.0 license and those of the GNU Free Documentation License (version 1.3 or later, with no Invariant Sections, no Front-Cover Texts, and no Back-Cover Texts).